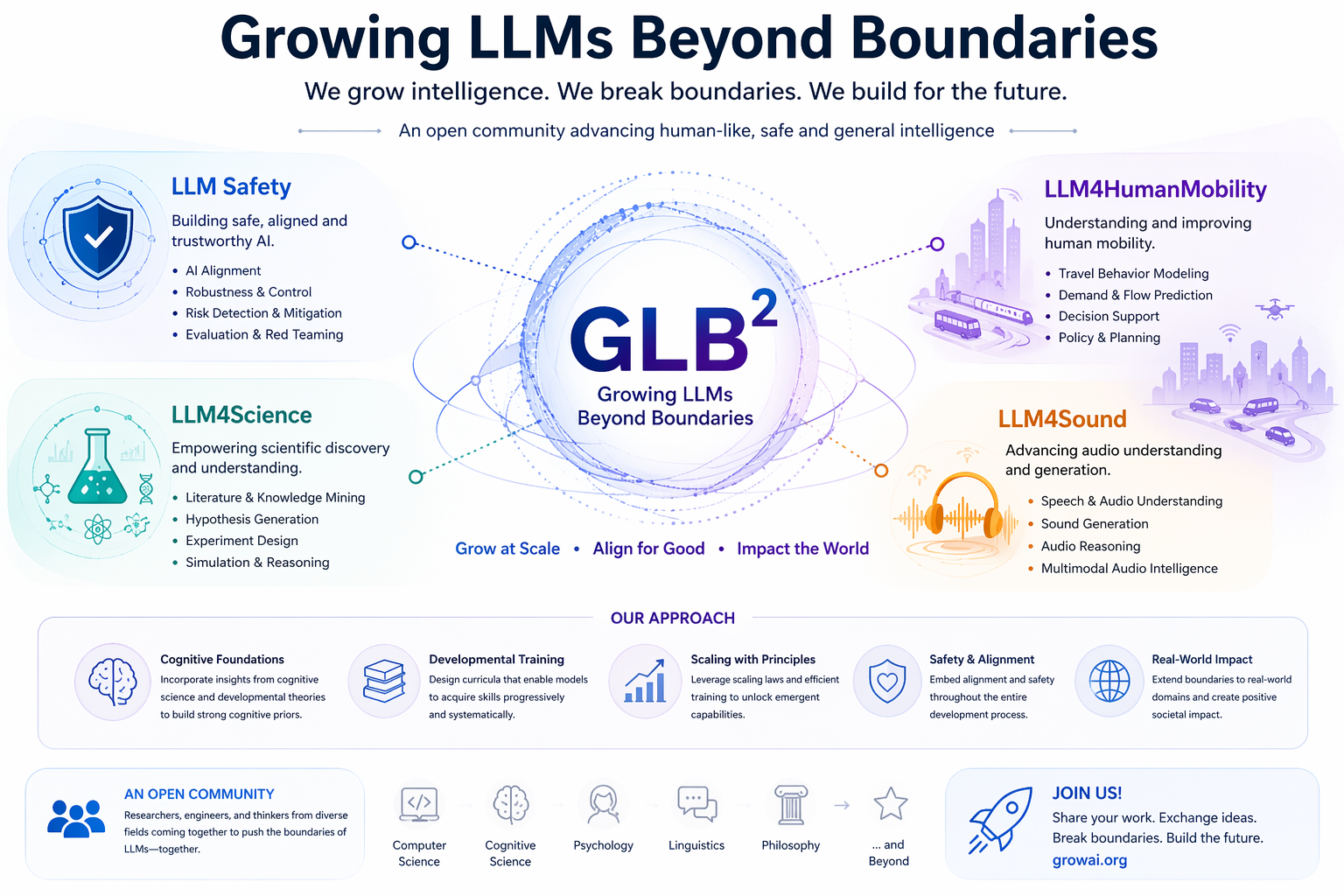

We are GLB² (Growing LLMs Beyond Boundaries). Human intelligence does not arise from mere data accumulation, but from structured cognitive priors that unfold through a coherent developmental trajectory; analogously, we view LLMs not as static systems optimized for specific downstream tasks, but as evolving frameworks capable of systematically expanding their capability boundaries. Current paradigms such as fine-tuning and prompting primarily adapt models to tasks like QA, coding, and reasoning, yet remain limited to surface-level alignment rather than enabling deep, cross-task and cross-modal generalization.

GLB² aims to extend LLMs beyond these conventional limits into domains such as LLM Safety, LLM4Science, LLM4HumanMobility, and LLM4Sound, where performance alone is insufficient without structured knowledge, long-horizon reasoning, and interaction with complex environments. In this view, downstream tasks become probes for measuring underlying cognitive competence rather than endpoints of optimization.

We advocate for a unified framework that integrates developmental training with scalable learning, enabling models to expand capability, generalization, and safety in tandem. We welcome all domain researchers to join the GLB² open community—a platform to showcase work, exchange ideas, and collaboratively push the boundaries of LLMs toward impactful, real-world applications.

We come together as GLB², an open-source community uniting researchers from computer science, engineering, biology, chemistry, cognitive science and beyond. Our ongoing research focuses on the following areas:

- LLM Safety: Understanding refusal boundaries, jailbreak vulnerabilities, and memory editing in aligned language models, while developing stronger alignment mechanisms for robust and secure deployment.

- LLM for Genomics Discovery: Building efficient and low-cost genomic foundation models with improved robustness, adaptation, and adversarial evaluation for scientific discovery in biology.

- LLM for Human Mobility: Advancing spatio-temporal reasoning with language models through efficient temporal tokenization and structured modeling of human mobility patterns.

- LLM for Multi-Modal Understanding: Enabling reliable reasoning across text, vision, speech, and video through multimodal question answering, retrieval, and interaction in complex real-world environments.

Latest Research

Mind the Inconspicuous: Revealing the Hidden Weakness in Aligned LLMs' Refusal Boundaries

Read PaperFast and Low-Cost Genomic Foundation Models via Outlier Removal

Read PaperST-MoE-BERT: A Spatial-Temporal Mixture-of-Experts Framework for Long-Term Cross-City Mobility Prediction

Read PaperGenoArmory: A Unified Evaluation Framework for Adversarial Attacks on Genomic Foundation Models

Read PaperJoin Our Community

Connect with researchers and contribute to our open-source initiative

Join us on Slack